Difference between mutual exclusion and synchronization

Introduction

Welcome to a new operating systems post. Today, we are going to clarify some terms frequently used in concurrency and operating systems design. You have probably heard of the following terms…

- Processes and threads

- Shared memory and resources

- Race conditions

- Mutual exclusion

- Critical section or critical region

- Synchronization primitives or constructs

- Locks and spin locks

- Mutex and semaphores

- Monitors and condition variables

- etc.

How does that relate to our main question: mutual exclusion vs synchronization ? Let us go through the list and see the pattern…

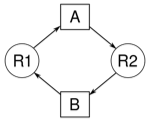

- Given a multitasking OS (i.e. processes running at the same time) or a multithreaded application (i.e. threads running at the same time)

- We need to share data or resources between processes or threads. Why? there are many benefits for sharing data. For example, sharing memory can be used to facilitate communication between processes

- Sharing is important but undesired side effects can happen if simultaneous access is not well orchestrated. Such bad side effects are called race conditions. We will explain the technical meaning of a race condition later. So what is the solution then?

- Implement mutual exclusion between concurrent threads by allowing only one thread at a time to have access to the shared resource. Technically speaking, only one thread at a time is allowed to be executing code in its critical section. What is a critical section ?

- Critical section is the part of code where a thread tries to access the shared resource. It is that simple!

- The techniques to prevent race conditions, achieve mutual exclusion, prevent multiple threads from entering their critical sections at the same time is called synchronization. How synchronization works ?

- Synchronization works by implementing synchronization primitives. What are these primitives?

- Here are few examples: lock, mutex, semaphore, monitor, etc. Implementation details for each one of these primitives is beyond the scope of this post

Now we know what synchronization means and why is it needed. In the next sections, we are going to define each term and see some examples.

Race condition

As we indicated earlier, a race condition happens when two or more threads attempt to access and modify a shared resource at the same time. The order in which threads access shared resources is out of thread control. The operating system scheduling algorithm does that. That is why side effects occur and that is why debugging threading bugs is often frustrating because there is no deterministic way to reproduce the issue. To be more specific, a race condition occurs when one thread checks a value then acts based on that. In the mean time, another thread modifies that value and the final result is out of sync. In a race condition, there is no reliable way to know what will happen next.

Let us take an example.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 |

// (1) A bank account is initialized // with a balance of 10 dollars balance = 10 // (2) Assume the withdraw(5) is called first withdraw(x) { // Subtract 5 dollars from the balance y = balance - x // Before a withdraw there should be sufficient funds if (y >= 0) // This is the check { // (3) Assume the deposit thread was called with deposit(5) // meaning the balance is gonna be 15 not 10 any more // (4) y is already 10 - 5 = 5 so balance will be 5 which is // wrong, it should be 10 instead because we deposited 5 balance = y // This is the act } } deposit(x) { balance = balance + x } |

Mutual exclusion

To clarify mutual exclusion even more, let us take a real life example: a public rest room ! only one person at a time can occupy the rest room. If the room is vacant, a person can occupy it, lock it from the inside, once done he or she unlocks the room so that it can be used by another person in line. The analogy between this real life example and the bank account balance example mentioned earlier is almost an exact match. In both cases we have a shared resource (balance vs room). In both cases, we need a locking system to enforce mutual exclusion (physical door lock vs software lock as we will see later).

Critical section or region

We said a critical section or critical region is the part of thread or process code where a shared resource is accessed and modified. In the previous bank account balance example, we have two threads sharing a balance variable. The critical section for the deposit thread is the line:

|

1 |

balance = balance + x |

and the critical section for the withdraw thread is the line:

|

1 |

balance = y |

To protect the critical section, we use a synchronization technique. Let us discuss a few…

Spin locks

In the previous example, before accessing the balance, each thread checks a flag. If the flag is reset, the thread sets the flag and continue executing code in critical section. If the flag is set (meaning locked), the thread enters a loop (spinning). While a thread is waiting, it continuously checks the flag until it gets a chance to execute. Of course, this synchronization technique can have a performance impact because the waiting thread is wasting CPU cycles and performing any useful work.

Let us take an example…

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

// available == 0 means balance variable // is locked by another thread so keep waiting // otherwise proceed // this means while not available wait while (available == 0) { //Do nothing just spin } deposit(x) { balance = balance + x // When done flag it as available available = 1 } |

Since the withdraw thread accesses the shared resource (balance) as well, locking is also required in a similar way. There are other synchronization primitives out there. Let us now talk about mutex and semaphores.

Mutex vs semaphore

You may check the following article for more details, for now let us just copy/paste the what we need…

Semaphores are also used to synchronize threads of execution so what is the difference between a mutex and a semaphore ? Let us quickly mention what is a semaphore? and do the comparison. A semaphore is another high level synchronization primitive. It restricts the number of simultaneous threads to a shared resource up to a maximum number known as the semaphore count. When a thread requests to access a resource, the semaphore counter is decremented. When the thread finishes, the counter is incremented. In that sense, a mutex is nothing but a binary semaphore meaning there can be only one thread at a time acquiring the mutex. The toilet example when comparing mutex to semaphores is very popular. A mutex is like a key to a toilet. One person at a time can have the key and occupy the toilet. When finished, he gives the key to the next person waiting in line. On the other hand, a semaphore is like having a number of identical toilet keys for several available toilets. The number of keys represents the semaphore count. The count is decremented whenever people come in. When all toilets are occupied, the count becomes zero so no one can use any toilets. If someone leaves, the count is incremented and the next person in line can use that key and so on.

Synchronization is a big topic, there is a lot to talk about, for example monitors and conditions variables. This was just an introduction, a separate post is needed to explain other synchronization constructs. Will stop here and summarize…

Summary

Here are the main points discussed in this article…

| Main points |

|---|

| Concurrent applications share resources (ex. for communication) |

| Concurrent access can lead to race conditions |

| Mutual exclusion can prevent race conditions by restricting access to shared resources using synchronization |

| Synchronization primitives are used to protect thread critical section in which the shared resource is accessed. |

| Example synchronization constructs: lock, mutex, semaphore, monitor |

Thanks for visiting. Please use the comments section for questions and feedback.

References

More from my site

About Author

Mohammed Abualrob

Software Engineer @ Cisco